Disk Storage Performance Considerations

When using the on-disk storage engine of BlobCity DB, tuning how data is laid out on physical storage volumes is key to performance. This page lists the best practices to follow.

Use XFS on Unix

XFS is the best-supported file system on any Unix distribution from a performance standpoint. Avoid ext4, because its inode limit is too low. NTFS is recommended only for small volumes of data, typically fewer than 4 billion files per database node.

Number of files required

The DB stores data and indexes in individual files. The on-disk storage engine therefore creates a large footprint of files, and it is crucial that these files are managed appropriately. Moving a large number of files is time-consuming, so it is recommended that the storage system be designed appropriately from the start.

The DB consumes at minimum 1 file on the file system per record stored in each collection. In addition, it consumes at minimum 1 file per indexed column within each record. It is important to plan the number of files that will be created on a single node for optimal performance. If you insert 100 records, each with 10 columns of which 2 are indexed, the DB would at minimum create 1 * 100 = 100 files (one per record) and an additional 100 (records) * 2 (indexes per record) = 200 files for the indexes. You would therefore consume at minimum 300 files on the file system to store 100 records with 2 columns indexed.
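The arithmetic above can be captured in a small planning helper. This is a minimal sketch, not part of BlobCity DB: the function name `min_files_required` is hypothetical, and it only computes the lower bound described above (one file per record plus one file per indexed column per record).

```python
def min_files_required(records: int, indexed_columns: int) -> int:
    """Lower bound on files created for one collection.

    Hypothetical helper: one file per record, plus one file per
    indexed column per record. Actual usage may be higher.
    """
    if records < 0 or indexed_columns < 0:
        raise ValueError("counts must be non-negative")
    data_files = records                     # one file per record
    index_files = records * indexed_columns  # one file per index per record
    return data_files + index_files


# The example from the text: 100 records with 2 indexed columns.
print(min_files_required(100, 2))  # prints 300
```

Summing this value over every collection planned for a node gives a rough minimum file count for that node's mount point.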

The maximum number of files on a single NTFS volume is ~4 billion. The maximum number of files on a single XFS volume is ~18 quintillion. The XFS limit is substantially larger, so XFS is recommended when storing large volumes of data in a BlobCity DB cluster. These limits apply to a single mount point. Across a cluster, the DB can store a virtually unlimited number of files. Each node in the cluster must point to an independent file system mount point.
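On a Unix node, one way to sanity-check a mount point against a planned file count is to read its inode figures with Python's `os.statvfs`. The sketch below is illustrative only: the helper names and the 20% safety margin are assumptions, not BlobCity recommendations, and the figures are whatever the file system reports (they may be estimates on file systems that allocate inodes dynamically).

```python
import os


def free_inodes(mount_point: str) -> int:
    """Free inodes reported by the file system at mount_point (Unix only)."""
    return os.statvfs(mount_point).f_ffree


def has_inode_headroom(planned_files: int, available_inodes: int,
                       margin: float = 0.2) -> bool:
    """True if planned_files fits with a safety margin to spare.

    margin is a hypothetical safety factor: 0.2 keeps 20% of the
    available inodes in reserve.
    """
    if planned_files < 0 or available_inodes < 0:
        raise ValueError("counts must be non-negative")
    usable = available_inodes * (1.0 - margin)
    return planned_files <= usable
```

For example, `has_inode_headroom(300, free_inodes("/data"))` checks whether the 300 files from the earlier example would fit on a volume mounted at the hypothetical path `/data`.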

SSD is a must for high performance

For high-performance requirements, store the data on SSD volumes. These can be volumes physically mounted on the machine or attached over the network. It is recommended that network-attached volumes be connected to the compute infrastructure over optical fibre cables rather than regular LAN cables.

Matching file system block size

Since each record creates a file on the file system, and each file system has a minimum block size, choosing the file system block size closest to the size of each record improves performance. When planning the block size, always round up to the nearest available block size and never round down. The table below gives examples of the file system block size to use for different average record sizes.

| Average Record Size | File System Block Size |
|---|---|
| 200 bytes | 512 bytes |
| 520 bytes | 1024 bytes |
| 1024 bytes | 1024 bytes |
| 1025 bytes | 2048 bytes |
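The round-up rule behind the table can be sketched as follows. The function name `block_size_for` is hypothetical, and both the 512-byte minimum and the 4096-byte cap are assumptions for illustration; the block sizes actually available depend on your file system, kernel, and storage setup.

```python
def block_size_for(avg_record_bytes: int,
                   min_block: int = 512,
                   max_block: int = 4096) -> int:
    """Smallest power-of-two block size that holds the average record.

    Always rounds up, never down. min_block and max_block are assumed
    limits; adjust them to what your platform supports.
    """
    if avg_record_bytes <= 0:
        raise ValueError("average record size must be positive")
    block = min_block
    while block < avg_record_bytes:
        block *= 2  # step to the next larger block size
    if block > max_block:
        raise ValueError("record size exceeds the assumed maximum block size")
    return block


# Reproduce the rows of the table above.
for size in (200, 520, 1024, 1025):
    print(size, "->", block_size_for(size))
```

A record of exactly 1024 bytes stays at 1024, while one extra byte pushes the choice to 2048, which matches the last two rows of the table.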

Different mount points per collection

Data for all collections can be stored on a single mounted volume. However, different collections are likely to have different average record sizes, so a single block size on the mount point may not suit all of them. For this reason, the data folders of specific collections can be mounted as independent volumes to increase performance.

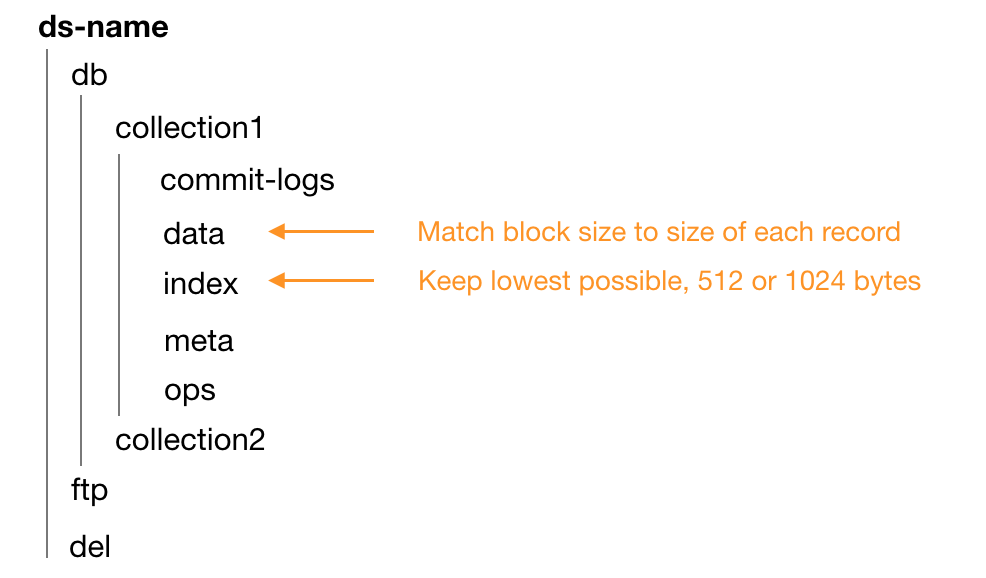

Figure 1: Different mount points for data and index folders

Different mount points for indexes

As seen in Figure 1, the data and index folders are recommended to have different block sizes. The data folder for collection1 may have a different block size than the data folder for collection2. However, it is recommended that the index folder always use the lowest available block size. Depending on your hypervisor and storage system setup, the lowest possible block size for an XFS volume could be either 512 or 1024 bytes.

Have the experts do it for you

BlobCity offers performance optimisation services. We'd be happy to help your organisation make the most of BlobCity DB.
